It is late, and you have just ordered your favorite dessert from a new find, one of those places you almost want to gatekeep because it feels like you discovered it before anyone else did. Within a minute of placing the order, you realize you are not going to stay awake long enough for it to arrive, so you try cancelling while it is still sitting there, unaccepted, untouched, very much reversible.

Instead, you are routed to an Agentic AI system, with a small disclaimer sitting at the top that says, ‘responses may not always be accurate,’ which, in hindsight, is doing a lot more work than it appears to. You explain the situation clearly, expecting this to be straightforward. The response comes back instantly, confident in a way that feels almost misplaced, informing you that you are eligible for a refund of a big, fat ₹0.

At that point, you are both half-asleep and completely awake, trying to figure out how something so obviously fixable has already been decided for you. You ask for a human agent, wait through a few minutes that feel longer than they should, go back and forth without anything actually changing, and somewhere in that loop you stop trying to cancel the order and start accepting that you are now staying up for it.

What changes in that moment is not the dessert, or even the money. It is your agency, your ability to step in and alter a decision that, by any reasonable standard, had not yet fully happened. This is the defining friction of our current moment with agentic AI: it does not fail loudly.

Where the Agentic AI Takes Over the Narrative

The easiest way to misunderstand what just happened is to assume the system failed to understand you. It did understand you, just not in the way you think.

What you expressed was user intent. What the system processed was a structured request that had to pass through a set of predefined conditions. Somewhere between those two, your request stopped being “cancel this, nothing has happened yet” and became “does this request qualify under allowed cancellation states.” Once that shift happens, the outcome of autonomous AI decision making is no longer about reasoning; it is about validation.

This is the part most people miss because the agentic AI system still feels responsive. It answers quickly, it sounds coherent, it even appears helpful. But it is operating inside a frame where your version of reality does not exist unless it can be mapped to something it already knows.

Decisions as Intersections, Not Intent

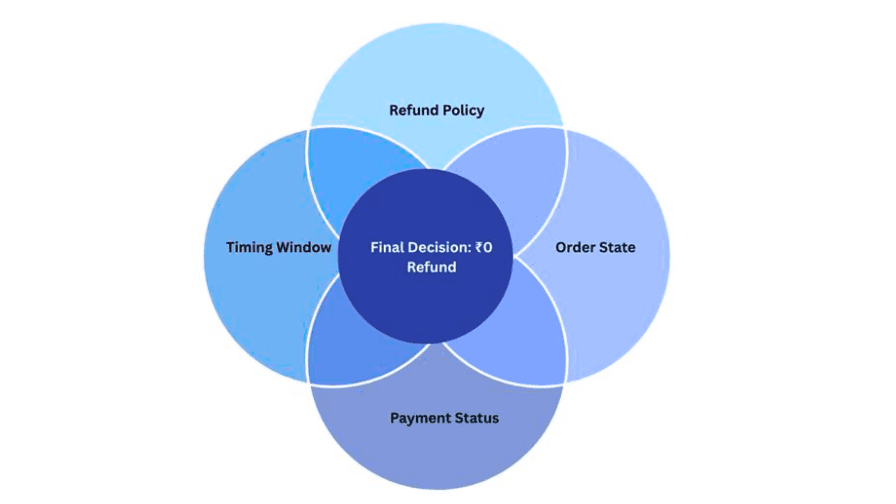

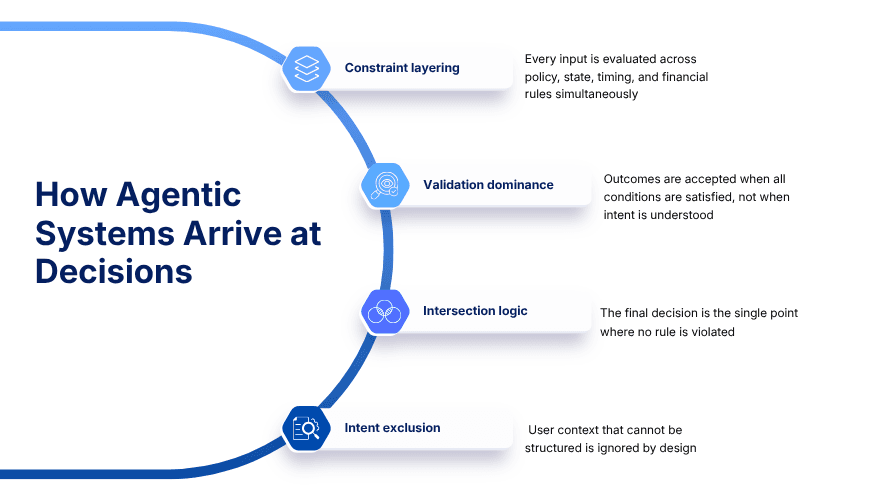

There is a useful way to think about how these systems arrive at decisions, and it does not come from software engineering as much as it does from geometry.

In mathematics, a “pencil of circles” refers to a one-parameter family of circles that all satisfy a shared condition. In the intersecting case, every circle in the family passes through the same two points; in the tangent case, they all touch at a single point. Those points are not chosen because they are meaningful in isolation. They are singled out because they satisfy every constraint simultaneously.

This is surprisingly close to how modern AI agents make decisions. Each rule, policy, model output, or constraint behaves like one of those circles. Refund policy is one. Order state is another. Payment status, fraud checks, timing windows, each adds another layer. The system does not evaluate your intent across these. It looks for the intersection, the single point where all constraints are satisfied without violation.

If that intersection happens to be a “₹0 refund,” then that is not a mistake from the system’s perspective. It is the only point where all its internal conditions overlap cleanly. Autonomous decision making of this kind is not broken. It is, by design, indifferent to what you actually meant.
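To make the intersection idea concrete, here is a minimal Python sketch. Every rule name, state label, and threshold in it is a hypothetical stand-in, not how any real ordering platform is built. Each constraint returns the set of outcomes it would permit, and the decision is simply whatever survives all of them.

```python
# Hypothetical outcome labels; a real platform would define its own.
ALL_OUTCOMES = {"full_refund", "partial_refund", "zero_refund"}


def timing_window(order):
    # Assumed rule: full refunds are only possible inside a 30-second grace window.
    if order["seconds_since_placed"] <= 30:
        return set(ALL_OUTCOMES)
    return ALL_OUTCOMES - {"full_refund"}


def order_state(order):
    # Assumed rule: partial refunds are only defined once preparation has started.
    if order["state"] == "preparing":
        return set(ALL_OUTCOMES)
    return ALL_OUTCOMES - {"partial_refund"}


def payment_status(order):
    # Assumed rule: nothing can be refunded unless the payment was captured.
    if order["payment"] == "captured":
        return set(ALL_OUTCOMES)
    return {"zero_refund"}


CONSTRAINTS = [timing_window, order_state, payment_status]


def decide(order):
    """Return the outcomes that every constraint allows (the intersection)."""
    allowed = set(ALL_OUTCOMES)
    for constraint in CONSTRAINTS:
        allowed &= constraint(order)
    return allowed


order = {
    "seconds_since_placed": 55,
    "state": "sent_to_restaurant",
    "payment": "captured",
    "user_note": "cancel this, nothing has happened yet",  # never read by any rule
}
print(decide(order))  # {'zero_refund'}
```

No single rule in this sketch says “refund nothing.” The ₹0 outcome is just the only label left once each rule has removed what it disallows, and the `user_note` field, the one place your intent lives, is never consulted.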

Finance Systems Have Been Doing This for Years

If this feels abstract, finance systems make it very concrete.

In Accounts Payable, an invoice is not “understood.” It is validated across multiple overlapping constraints. Does it match the purchase order? Does it align with the goods receipt? Is the price within tolerance? Is the vendor recognized? Each of these is its own circle, and the system is looking for the intersection where all of them agree.

- Three-way match: Intersection of invoice, PO, and receipt data defines validity, not the actual service experience

- Tolerance thresholds: Expand the intersection zone so minor mismatches still pass through

- Auto-approval logic: Moves anything within that intersection forward without revisiting context

- Exception handling: Anything outside the overlap is pushed out, often without enough context to resolve
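The checks above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not any ERP's actual matching logic: the `Line` type, the 2% price tolerance, and the status labels are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Line:
    quantity: int
    unit_price: float


def three_way_match(invoice, po, received_quantity, price_tolerance=0.02):
    """Compare invoice, purchase order, and goods receipt.

    Returns (status, reasons). The 2% default tolerance is an assumed value.
    """
    reasons = []
    if invoice.quantity != received_quantity:
        reasons.append("quantity differs from goods receipt")
    if invoice.quantity > po.quantity:
        reasons.append("quantity exceeds purchase order")
    price_gap = abs(invoice.unit_price - po.unit_price) / po.unit_price
    if price_gap > price_tolerance:
        reasons.append("unit price outside tolerance")
    status = "auto_approve" if not reasons else "exception"
    return status, reasons


po = Line(quantity=100, unit_price=10.00)

# A 1.5% price difference sits inside the tolerance band and moves forward.
print(three_way_match(Line(100, 10.15), po, received_quantity=100))

# A 5% difference falls outside the overlap and is pushed to exceptions.
print(three_way_match(Line(100, 10.50), po, received_quantity=100))
```

The exception that comes out carries a list of reason strings and nothing else. Whether the service was actually delivered well, or whether the vendor had agreed to a price change by phone, is not something this function can see.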

On the Accounts Receivable side, the same logic applies in reverse.

- Auto cash application: Payment is matched where amount, reference, and timing intersect

- Short payment logic: Differences are classified based on what fits predefined categories

- Remittance parsing: Free-form inputs are forced into structured intersections

- Dispute workflows: Issues are routed based on classification, not necessarily resolution
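The receivables side can be sketched the same way. The field names, the 90-day window, and the three classification labels below are assumptions made for illustration; real cash application engines are considerably more elaborate.

```python
from datetime import date
from decimal import Decimal


def apply_cash(payment, open_invoices, window_days=90):
    """Match a payment where reference, timing, and amount intersect.

    Returns (classification, invoice_number). All labels are hypothetical.
    """
    for invoice in open_invoices:
        if payment["reference"] != invoice["number"]:
            continue  # reference does not line up
        age_days = (payment["date"] - invoice["issued"]).days
        if not 0 <= age_days <= window_days:
            continue  # outside the assumed matching window
        if payment["amount"] == invoice["amount"]:
            return "applied", invoice["number"]
        if payment["amount"] < invoice["amount"]:
            return "short_payment", invoice["number"]
    return "unapplied", None


open_invoices = [
    {"number": "INV-001", "amount": Decimal("1000.00"), "issued": date(2024, 1, 10)},
]

exact = {"reference": "INV-001", "amount": Decimal("1000.00"), "date": date(2024, 2, 1)}
short = {"reference": "INV-001", "amount": Decimal("950.00"), "date": date(2024, 2, 1)}
free_form = {
    "reference": "inv 001 less damaged goods",
    "amount": Decimal("950.00"),
    "date": date(2024, 2, 1),
}

print(apply_cash(exact, open_invoices))      # ('applied', 'INV-001')
print(apply_cash(short, open_invoices))      # ('short_payment', 'INV-001')
print(apply_cash(free_form, open_invoices))  # ('unapplied', None)
```

The third payment is the telling one. The customer explained exactly why they paid less, but because the explanation arrived in the reference field as free text, the sketch cannot place it anywhere and the reason is lost along with the match.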

The system is not asking what the transaction means. It is asking where it fits. And this distinction, between meaning and fit, is where AI automation accountability starts to become genuinely difficult.

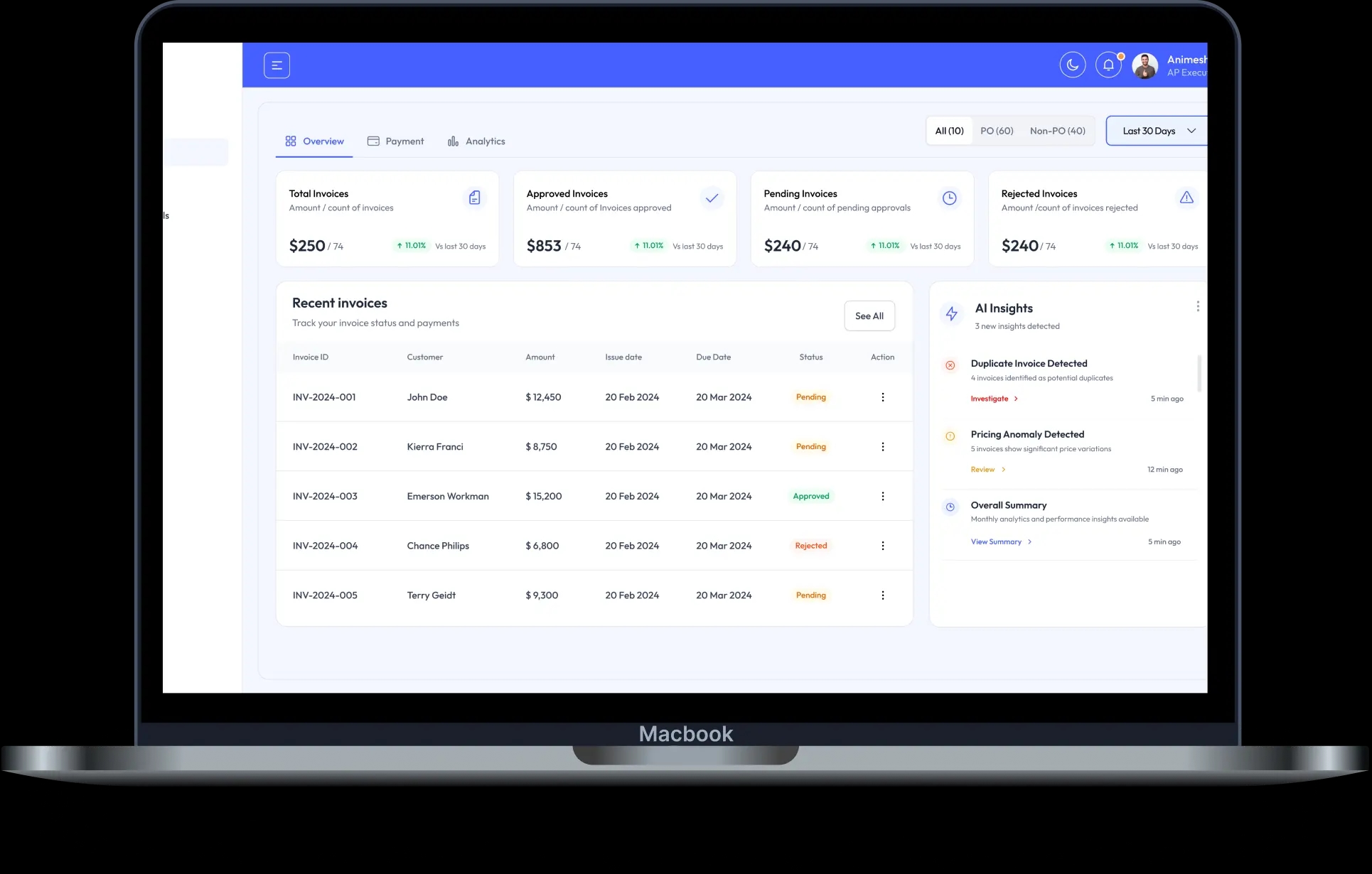

Neil, our AI Co-Worker for AP, is designed with this challenge in mind, building accounts payable automation that does not just process invoices in a vacuum, but creates space for exception handling that preserves human judgment where it matters most.

The Quiet Trade-Off No One Talks About

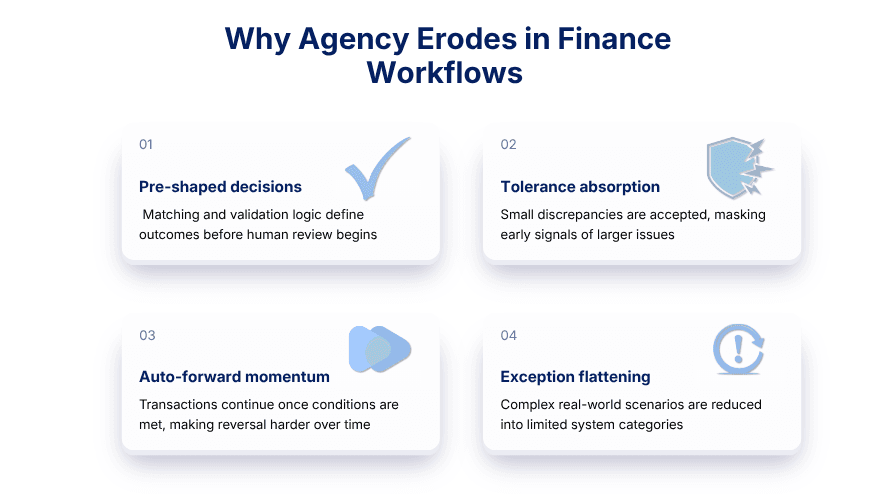

Every automated system makes a trade-off between interpretation and consistency, even if it is never stated explicitly.

Interpretation allows for context, nuance, and the ability to say “this does not quite fit, let’s pause.” Consistency ensures that the same inputs always produce the same outputs, which is critical at scale. The moment you prioritize consistency, you accept that anything outside predefined patterns will be reshaped until it fits.

That reshaping is where agency begins to thin out. Not because the system blocks you, but because it no longer recognizes the version of the situation you are operating in.

This is the core tension at the heart of autonomous AI agents: they are extraordinarily good at processing known patterns, and extraordinarily brittle when reality does not cooperate. The cost of that brittleness is rarely visible in any single transaction. It accumulates in the aggregate, in the gap between what users actually experience and what the system recorded.

From Supporting Decisions to Making Them

The shift from systems as tools to agentic AI systems is not about intelligence as much as it is about control over decision boundaries.

Earlier systems required explicit input at each step. Now, AI agent systems infer, prioritize, and in many cases execute.

- Payment prioritization: Systems decide which invoices to settle based on discount windows and liquidity positioning

- Collection sequencing: Follow-ups are triggered based on predicted behavior rather than fixed schedules

- Cash allocation: Payments are distributed using probabilistic matching instead of exact references

- Adjustment logic: Corrections are suggested or applied based on historical patterns

At that point, the agentic AI system is no longer waiting for a decision. It is preparing one in advance, often narrowing the space so much that approval becomes a formality.

Human in the Loop, or Just Along for the Flow?

There is comfort in saying humans are still involved, but involvement and control are not the same thing.

Human-in-the-loop AI is one of the most discussed concepts in enterprise deployment right now. The premise sounds reassuring: a human reviews, approves, and occasionally overrides. But in most workflows, humans enter after agentic AI has already shaped the outcome. They always operate within boundaries that the system has already defined.

- Post-processing review: Humans validate outputs, not how those outputs were formed

- Constrained overrides: Interventions must still align with system logic

- Escalation triggers: Humans appear only when the system cannot resolve something

- Workflow momentum: Decisions become harder to reverse as they move forward

At that point, the human is not steering the system. They are adjusting to it.

Why Scale Changes Everything

At small scale, these issues are visible and correctable. At scale, they become patterns.

Once an agentic AI system processes enough transactions with the same logic, repetition starts to look like correctness. The system reinforces its own assumptions simply by applying them consistently.

- Pattern reinforcement: Repeated outcomes define what “normal” looks like

- Accumulated drift: Small mismatches grow into larger gaps over time

- Audit fatigue: Issues are explainable individually but difficult to explain collectively

- Operational acceptance: Imperfections become part of the workflow

This is the deeper challenge with autonomous AI agents in enterprise settings: they do not just process reality, they begin to shape it. This is why Agentic AI governance in 2026 is becoming a critical discussion for enterprises.

Designing for Agency, Not Just Automation

If Agentic AI systems are going to act, they need to be designed with the possibility that their own logic is incomplete.

- Context-aware validation: Evaluate meaning, not just structure

- Ambiguity tolerance: Allow uncertainty instead of forcing resolution

- Upstream intervention: Enable human input before decisions finalize

- Transparent logic: Make decision pathways visible and challengeable
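One way to picture these principles working together is a decision function that refuses to force a result when its rules disagree. The sketch below is a hedged illustration, not E42's implementation: the `Decision` type, the rule names, and the `hold_for_human` label are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Decision:
    outcome: str
    trace: list = field(default_factory=list)  # (rule name, proposal) pairs
    needs_human: bool = False


def decide_with_agency(request, rules):
    """Finalize only when every rule proposes the same outcome.

    Each rule returns an outcome label, or None if it cannot classify the
    request. Disagreement or a None is treated as ambiguity and held for a
    person before anything is finalized.
    """
    trace = [(name, rule(request)) for name, rule in rules]
    proposals = {proposal for _, proposal in trace}
    if len(proposals) == 1 and None not in proposals:
        return Decision(outcome=proposals.pop(), trace=trace)
    return Decision(outcome="hold_for_human", trace=trace, needs_human=True)


# Assumed rules for the dessert order from the opening.
rules = [
    ("order_state",
     lambda r: "cancel_free" if r["state"] == "unaccepted" else "no_refund"),
    ("timing_window",
     lambda r: "cancel_free" if r["seconds_since_placed"] <= 30 else "no_refund"),
]

decision = decide_with_agency({"state": "unaccepted", "seconds_since_placed": 55}, rules)
print(decision.outcome)      # hold_for_human
print(decision.needs_human)  # True
print(decision.trace)        # [('order_state', 'cancel_free'), ('timing_window', 'no_refund')]
```

The design choice here is that disagreement between rules is surfaced rather than resolved silently, and the trace tells the reviewer which rule said what. The person enters before the outcome exists, not after it has already been recorded.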

Without this, every additional layer of automation simply reinforces the same constraint-driven behavior.

E42, our No-Code AI platform, is built around exactly this premise, designing AI co-workers that handle enterprise complexity while explicitly preserving the space for human oversight at decision-critical moments.

The Real Cost of Getting It Wrong

When agentic AI operates without meaningful human oversight in AI workflows, the costs are rarely dramatic. They are quiet.

A refund that should have taken two minutes takes forty. A payment that should have been flagged as a duplicate clears because it falls within tolerance. A vendor dispute that a finance analyst would have recognized in seconds gets routed through a classification tree that has no category for “unusual but valid.”

These are not system failures. From the agentic AI perspective, everything worked exactly as designed. That is precisely what makes them hard to catch and harder to fix.

AI automation accountability is not a problem that can be solved purely through better models. It requires organizational design: workflows, escalation paths, audit mechanisms, and governance structures that treat human-in-the-loop AI not as a workaround, but as a core architectural feature.

The EU AI Act and emerging global AI governance frameworks are beginning to formalize this expectation. The direction is clear: autonomous AI agents that make consequential decisions need to be explainable, auditable, and structured so that meaningful human control remains possible.

Where Agency Actually Stands

The dessert order is small, almost trivial, but it reveals something that scales far beyond it.

You interacted with an agentic AI system that behaved exactly as it was designed to. It processed your request, evaluated it against its rules, and arrived at an outcome that was internally consistent. At no point did it hesitate, because from its perspective, there was nothing to hesitate about.

And yet, your ability to influence that outcome, to step in with context that felt obvious and immediate, had already been reduced.

Agents are becoming better at acting. That part is clear. What is less clear is whether, as they take on more decisions, the space for agency is being preserved in any meaningful way, or whether it is quietly being replaced by intersections of logic that leave no room for anything that does not already fit.